Chat API with context and streaming

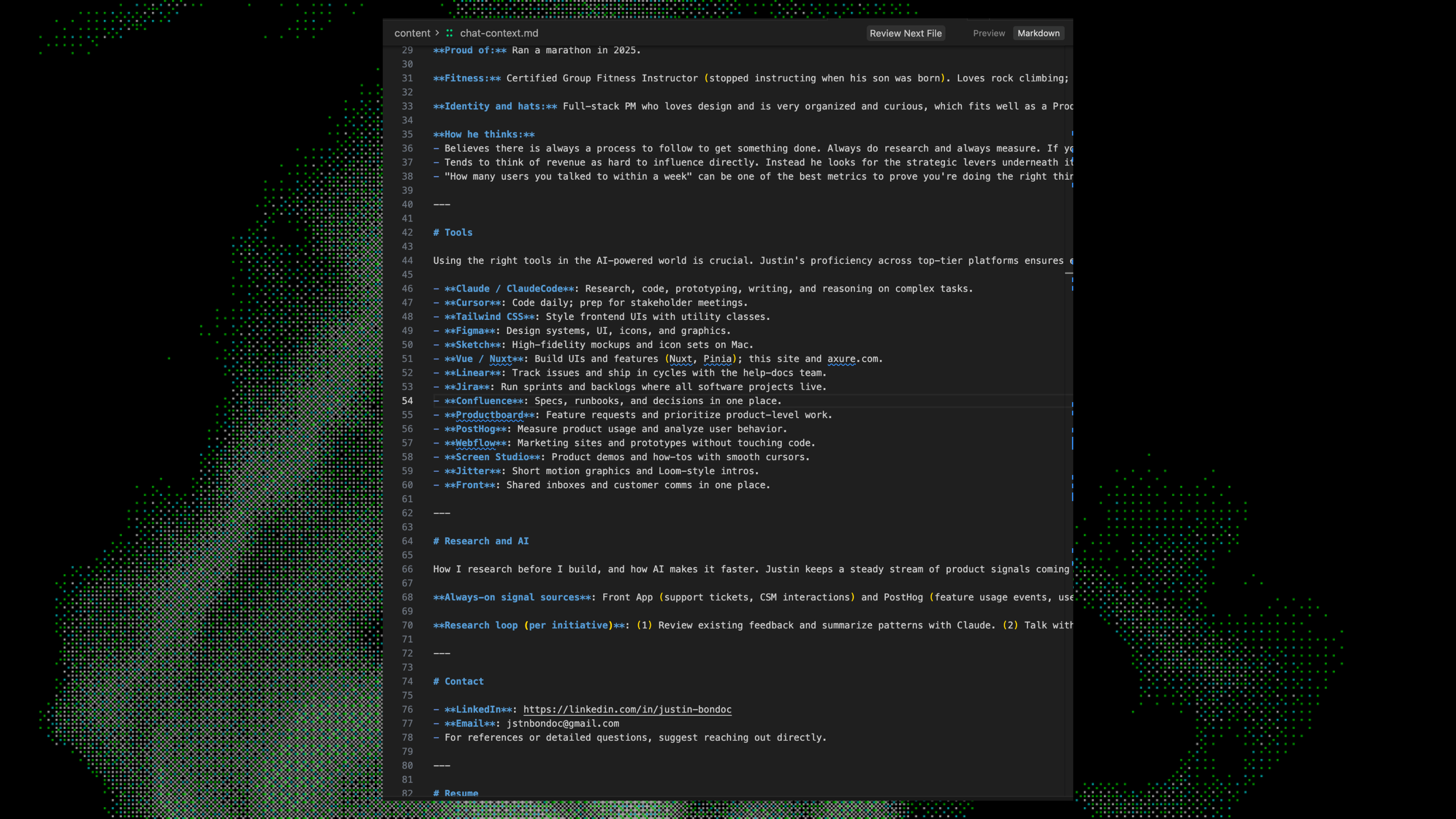

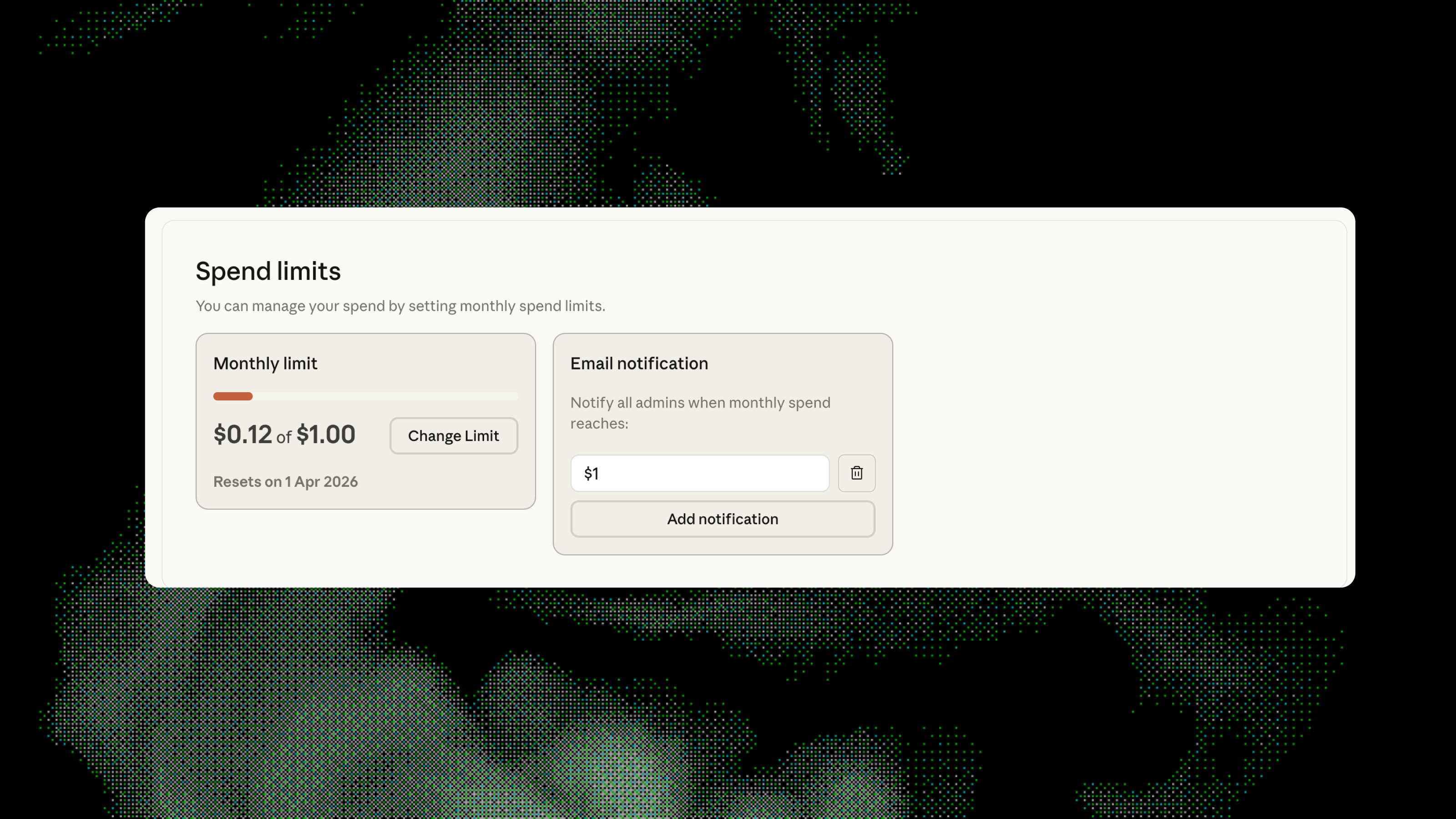

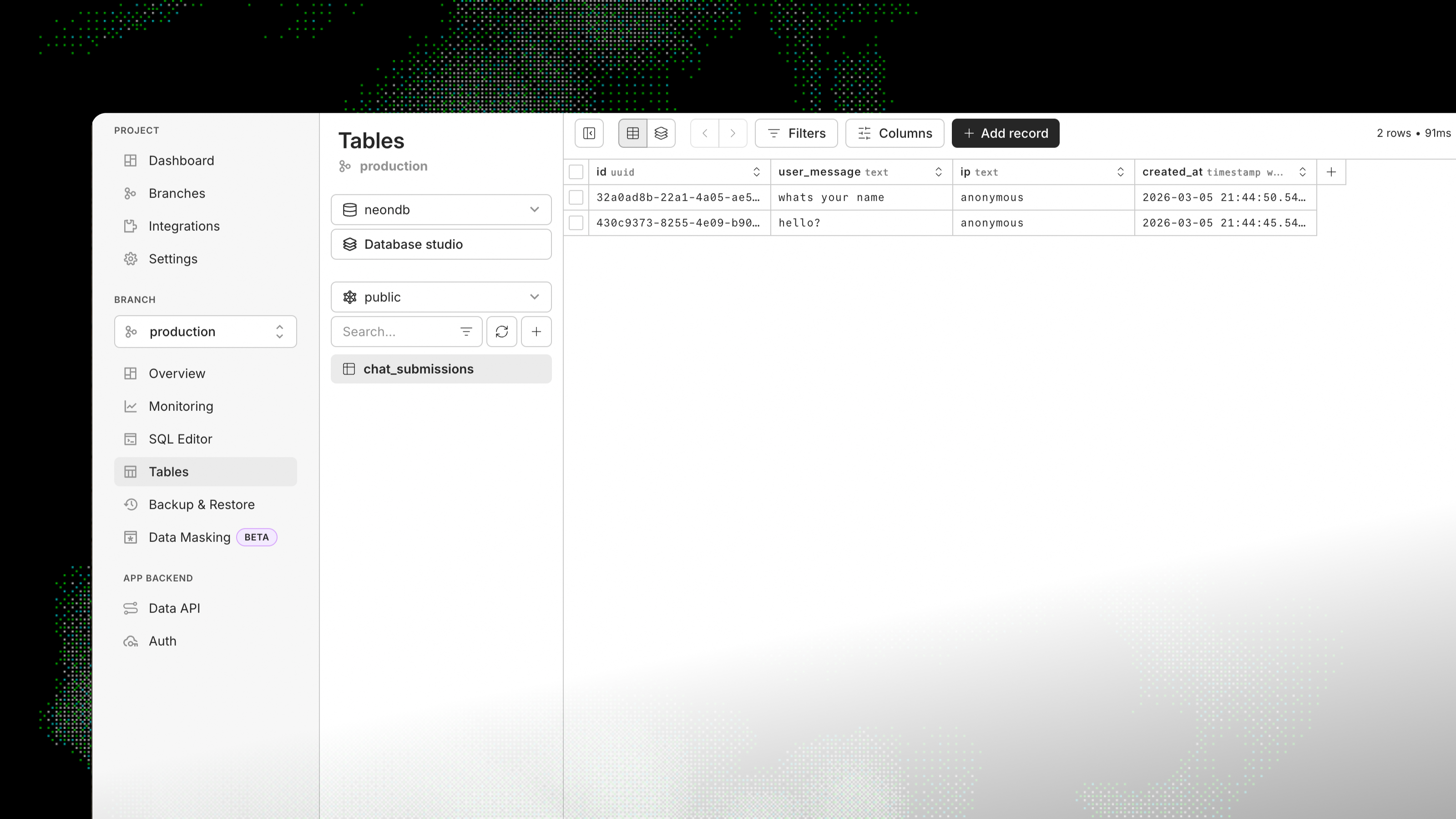

POST /api/chat streams responses from Claude (Sonnet 4.5), given a system prompt and a single markdown context file.

I needed the model to know about me without building something complex I'd have to learn from scratch.

The server reads content/chat-context.md and injects it into the system prompt. Incoming messages are converted and sent to the API. The response is streamed back via the Vercel AI SDK, so the UI updates in real time.
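The prompt-assembly and message-conversion steps could look roughly like the sketch below. It is not the actual route code: the `UiMessage` and `ModelMessage` shapes, the `BASE_PROMPT` wording, the `<context>` delimiters, and both function names are assumptions made for illustration. It also assumes the contents of content/chat-context.md have already been read into a string, and it leaves out the streaming call itself.

```typescript
// Hypothetical message shapes; the real UI and API types may differ.
type UiMessage = { id: string; role: "user" | "assistant"; text: string };
type ModelMessage = { role: "user" | "assistant"; content: string };

// Hypothetical base prompt; the actual wording is not given in the write-up.
const BASE_PROMPT = "You answer questions about the site owner.";

// Inject the markdown context into the system prompt.
// The <context> delimiters are an assumption, used so the model can
// tell the instructions apart from the injected file contents.
function buildSystemPrompt(context: string, base: string = BASE_PROMPT): string {
  return `${base}\n\n<context>\n${context.trim()}\n</context>`;
}

// Convert UI messages into the minimal role/content shape,
// dropping messages that contain only whitespace.
function toModelMessages(messages: UiMessage[]): ModelMessage[] {
  return messages
    .filter((m) => m.text.trim().length > 0)
    .map((m) => ({ role: m.role, content: m.text }));
}
```

In a route handler, the result of `buildSystemPrompt` would go in as the system prompt and the result of `toModelMessages` as the message list for the streaming call. Keeping both as pure functions makes them easy to test without touching the API.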