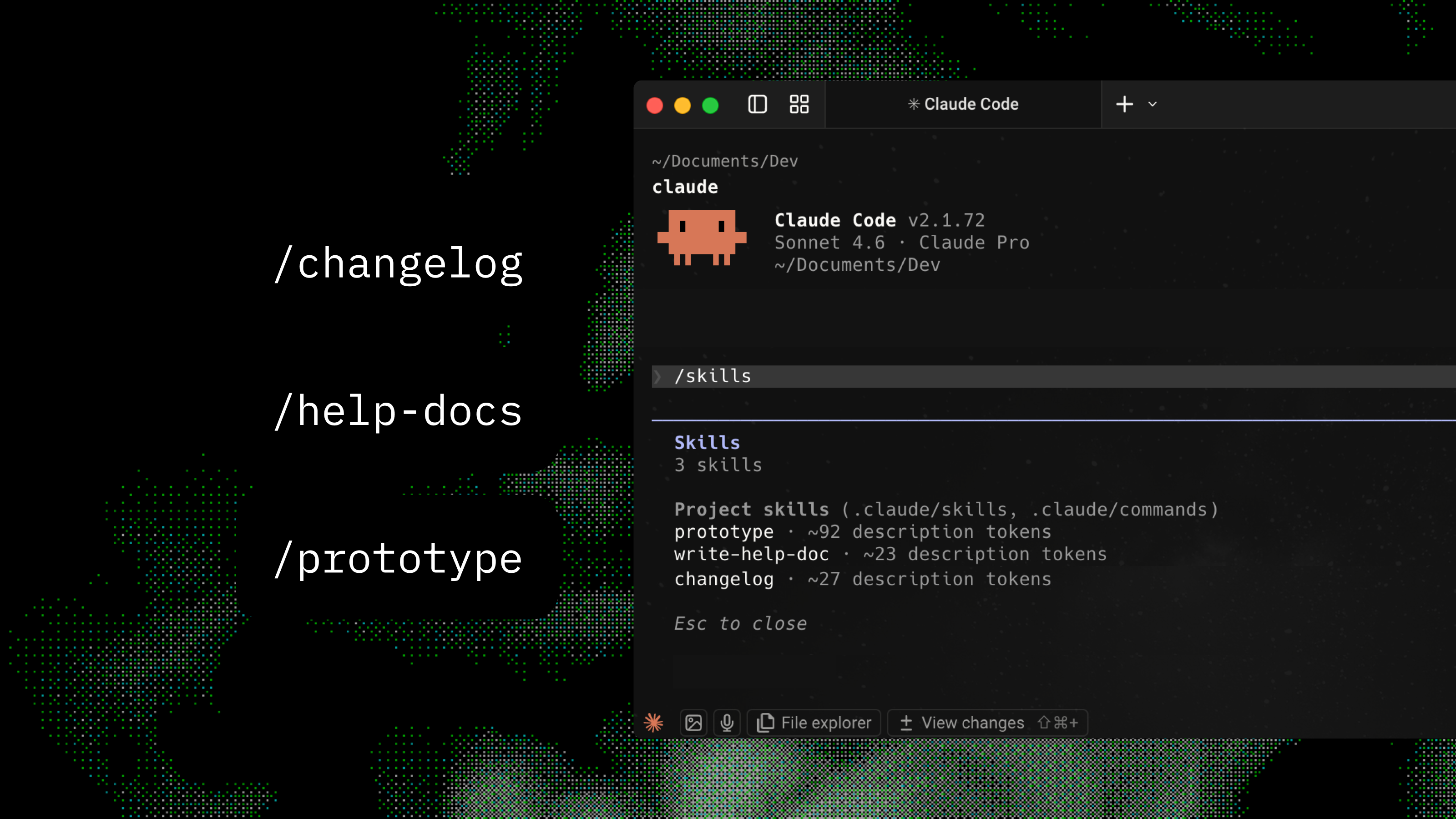

/prototype - three interactive directions from one prompt

Three distinct interactive HTML prototypes from one prompt. No build step.

Exploring UI directions used to mean hours of wireframing in Axure RP or Figma. Static images. Hard to interact with or iterate on during a discussion.

The skill has a structured planning step before any code: the model defines three directions (name, core idea, trade-off), spread across familiar, structural, and experimental approaches. It then generates each one as a standalone HTML file using Vue and Tailwind via CDN. No build step. Real content. Meaningful UI states wired up. The output includes a comparison table and a one-line open command for each direction.
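To make "a standalone HTML file using Vue and Tailwind via CDN" concrete, here is a minimal Python sketch of the generation step plus the comparison table with open commands. It is not the skill's source. The `Direction` record and its `slug` field, the `write_prototypes` and `comparison_table` helpers, the single toggle used as the "wired-up state", and the CDN URLs (Vue 3's global build on unpkg, Tailwind's v3 Play CDN) are all assumptions chosen for illustration.

```python
import html
import sys
from dataclasses import dataclass
from pathlib import Path

# Hypothetical planning record: name, core idea, trade-off, plus an assumed file-safe slug.
@dataclass
class Direction:
    slug: str
    name: str
    core_idea: str
    trade_off: str

# Assumed CDN URLs: Vue 3 global build and Tailwind's v3 Play CDN.
VUE_CDN = "https://unpkg.com/vue@3/dist/vue.global.js"
TAILWIND_CDN = "https://cdn.tailwindcss.com"

# Doubled braces survive str.format as literal braces for Vue and JS.
TEMPLATE = """<!doctype html>
<html lang="en">
<head>
  <meta charset="utf-8">
  <title>{title}</title>
  <script src="{tailwind}"></script>
  <script src="{vue}"></script>
</head>
<body class="p-8 font-sans">
  <div id="app">
    <h1 class="text-2xl font-bold">{title}</h1>
    <p class="text-gray-600">{idea}</p>
    <!-- One wired-up UI state: a toggle the reviewer can click. -->
    <button class="mt-4 rounded bg-black px-3 py-1 text-white" @click="open = !open">
      {{{{ open ? 'Hide details' : 'Show details' }}}}
    </button>
    <p v-if="open" class="mt-2">Trade-off: {trade_off}</p>
  </div>
  <script>
    Vue.createApp({{ data: () => ({{ open: false }}) }}).mount('#app')
  </script>
</body>
</html>
"""

def write_prototypes(directions, out_dir):
    """Write one self-contained HTML file per direction and return the paths."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    paths = []
    for d in directions:
        page = TEMPLATE.format(
            title=html.escape(d.name),
            idea=html.escape(d.core_idea),
            trade_off=html.escape(d.trade_off),
            tailwind=TAILWIND_CDN,
            vue=VUE_CDN,
        )
        path = out / f"{d.slug}.html"
        path.write_text(page, encoding="utf-8")
        paths.append(path)
    return paths

def comparison_table(directions, paths):
    """Markdown comparison table with a one-line open command per file."""
    # Assumption: `open` on macOS, `xdg-open` elsewhere (Windows would use `start`).
    opener = "open" if sys.platform == "darwin" else "xdg-open"
    rows = ["| Direction | Core idea | Trade-off | Open |", "|---|---|---|---|"]
    for d, p in zip(directions, paths):
        rows.append(f"| {d.name} | {d.core_idea} | {d.trade_off} | `{opener} {p}` |")
    return "\n".join(rows)

if __name__ == "__main__":
    import tempfile
    # Hypothetical plan spanning the familiar / structural / experimental spread.
    plan = [
        Direction("familiar", "Familiar", "Conventional list and detail layout", "Safe but unsurprising"),
        Direction("structural", "Structural", "Reorganize content around tasks", "Requires relearning navigation"),
        Direction("experimental", "Experimental", "Canvas-style spatial layout", "Harder to scan quickly"),
    ]
    out_dir = tempfile.mkdtemp()
    print(comparison_table(plan, write_prototypes(plan, out_dir)))
```

Because both libraries load from a CDN when the page opens, each file runs directly in a browser with no build step. The trade-off is that opening a prototype needs network access, and Tailwind documents its Play CDN as intended for development rather than production.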
